
Below are the posts published in this section, in chronological order.

The instability of large, complex societies is a predictable phenomenon, according to a new mathematical model that explores the emergence of early human societies via warfare. Capturing hundreds of years of human history, the model reveals the dynamical nature of societies, which can be difficult to uncover in archaeological data.

The research, led by Sergey Gavrilets, associate director for scientific activities at the National Institute for Mathematical and Biological Synthesis and a professor at the University of Tennessee-Knoxville, is published in the first issue of the new journal Cliodynamics: The Journal of Theoretical and Mathematical History, the first academic journal dedicated to research in the emerging field of theoretical and mathematical history.

The numerical model focuses on the size and complexity of emerging "polities", or states, as well as on their longevity and settlement patterns as shaped by warfare. A number of factors were measured, but unexpectedly, just two had the largest effect on the results: how strongly a state's power translates into its probability of winning a conflict, and a leader's average time in power. According to the model, the stability of large, complex polities is strongly promoted if the outcomes of conflicts are mostly determined by the polities' wealth or power, if there exist well-defined and accepted means of succession, and if control mechanisms within polities are internally specialized. The results also showed that polities go through what the authors call "chiefly cycles": rapid cycles of growth and collapse driven by warfare.

The wealthiest polity does not necessarily win a conflict, however; many factors besides wealth can affect the outcome, the authors write. The model also suggests that a polity can collapse rapidly even without environmental disturbances such as drought or overpopulation.
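The article does not give the model's equations, but the idea that outcomes hinge on how strongly power translates into victory can be illustrated with a contest success function, a common modeling device in which an exponent controls how decisive relative power is. The form below, along with the names `win_probability` and `b`, is our assumption for illustration, not necessarily the rule Gavrilets and colleagues used.

```python
def win_probability(power_a: float, power_b: float, b: float) -> float:
    """Probability that polity A beats polity B under an ASSUMED contest
    success function: P(A wins) = Pa**b / (Pa**b + Pb**b).

    b = 0 turns every conflict into a coin toss; larger b lets the more
    powerful polity win more reliably, but never with certainty for finite b.
    """
    weight_a = power_a ** b
    weight_b = power_b ** b
    return weight_a / (weight_a + weight_b)


# A polity twice as powerful as its rival, under increasingly decisive scaling.
for b in (0.0, 1.0, 4.0):
    print(f"b = {b}: P(stronger side wins) = {win_probability(2.0, 1.0, b):.3f}")
```

Even with a steep exponent the weaker side still wins some conflicts, which is consistent with the point that wealth alone does not decide the outcome.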

By using a mathematical model, the researchers were able to capture the dynamical processes that cause chiefdoms, states and empires to emerge, persist and collapse at the scale of decades to centuries.

"In the last several decades, mathematical models have been traditionally important in the physical, life and economic sciences, but now they are also becoming important for explaining historical data," said Gavrilets. "Our model provides theoretical support for the view that cultural, demographic and ecological conditions can predict the emergence and dynamics of complex societies."

Source: EurekAlert

The world has waited with bated breath for three decades, and a group of academics, engineers, and math geeks has finally found the magic number. That number is 20, and it is the maximum number of moves it takes to solve a Rubik's Cube.

Known as "God's Number", the magic number required about 35 CPU-years and a good deal of man-hours to find. Why? Because there are 43,252,003,274,489,856,000 possible positions of the cube, and the computer search that finally pinned down God's Number had to solve them all. (The terms "God's Number" and "God's Algorithm" derive from the idea that if God were solving a Cube, he/she/it would always do it in the most efficient way possible.)

The team has published a full account of the history of God's Number and the math behind it. In summary, they broke the possible positions down into sets, then drastically cut the number of positions they had to solve by exploiting symmetry (if you scramble a Cube randomly and then turn it upside down, you haven't changed the solution).
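The position count and the idea of splitting it into sets can be checked with a little arithmetic. The sketch below recomputes the 43 quintillion figure from the cube's corner and edge pieces and then, assuming the sets are cosets of the subgroup generated by the moves U, D, R2, L2, F2, B2 (the subgroup used in Kociemba's two-phase algorithm; this choice is our assumption, not something the article states), shows how many sets of what size that split would produce. The function names are hypothetical.

```python
from math import factorial


def cube_positions() -> int:
    """Count reachable Rubik's Cube positions.

    8 corners: 8! permutations x 3**7 twists (the last twist is forced).
    12 edges: 12! permutations x 2**11 flips (the last flip is forced).
    Corner and edge permutations must share parity, which halves the total.
    """
    corners = factorial(8) * 3 ** 7
    edges = factorial(12) * 2 ** 11
    return corners * edges // 2


def h_subgroup_size() -> int:
    """Size of the subgroup generated by U, D, R2, L2, F2, B2 (assumed set structure).

    These moves preserve all orientations and keep the four middle-slice edges
    in the middle slice: 8! corner permutations, 8! permutations of the other
    edges, 4! of the slice edges, halved again by the parity constraint.
    """
    return factorial(8) * factorial(8) * factorial(4) // 2


total = cube_positions()
set_size = h_subgroup_size()
print(f"positions:     {total:,}")
print(f"positions/set: {set_size:,}")
print(f"sets:          {total // set_size:,}")
```

The division comes out exact, as it must for cosets of a subgroup; symmetry then lets many of these sets be skipped because they are rotations or mirror images of others.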

They then borrowed some computing time from Google (one of the principals is an engineer there) and burned about 35 core-years to solve all the possible positions. The number 20 had been the lower limit for God's Number for more than a decade, but the team was finally able to whittle the upper limit down to 20 from 22, where it had been trimmed in 2008.

So far the algorithm has identified some 12 million distance-20 positions, though there are certainly many more. The team's write-up shows some of the hardest positions and explains exactly how they tackled the problem.

Source: PopSci

An Oxford University study suggests that people living in countries with 'free market' regimes are more likely to become obese due to the stress of being exposed to economic insecurity.

The researchers believe that the stress of living in a competitive social system without a strong welfare state could be causing people to overeat. According to the study published in the latest issue of the journal Economics and Human Biology, Americans and Britons are much more likely to be obese than Norwegians and Swedes.

Oxford researchers compared 11 affluent countries and found that those with a liberal market regime (strong market incentives and relatively weak welfare states) experienced one-third more obesity on average. Their analysis of nearly 100 surveys, carried out between 1994 and 2004, revealed that the highest prevalence of obesity reported in a single survey was in the United States where one-third of the population was classed as obese. By contrast, Norway had the lowest prevalence of obesity in a single survey at just five per cent.

The study compared 'market-liberal' countries (United States, Britain, Canada and Australia) with seven relatively affluent European countries that have systems that traditionally offer stronger social protection (Finland, France, Germany, Italy, Norway, Spain and Sweden). It concludes that economic security plays a significant role in determining levels of obesity. Countries with higher levels of job and income security were associated with lower levels of obesity.

In the past, the rise of obesity in affluent societies has frequently been attributed to the ready supply of cheap, accessible, high-energy, pre-processed food in fast food outlets and supermarkets, a cause researchers call the 'fast food shock'. Oxford researchers measured the impact of fast food using a price index, constructed by The Economist magazine, that shows the international variation in the cost of the McDonald's Big Mac hamburger. They found that the availability of fast food may not be as significant as previously thought: by their calculations, its effect on the prevalence of obesity was only half as large as the effect of economic insecurity.

Lead author Professor Avner Offer, Chichele Professor of Economic History at the University of Oxford, said: 'Policies to reduce levels of obesity tend to focus on encouraging people to look after themselves but this study suggests that obesity has larger social causes. The onset and increase of large-scale obesity began during the 1980s, and coincided with the rise of market-liberalism in the English-speaking countries.

'It may be that the economic benefits of flexible and open markets come at a price to personal and public health which is rarely taken into account. Basically, our hypothesis is that market-liberal reforms have stimulated competition in both the work environment and in what we consume, and this has undermined personal stability and security.'

The Oxford research team based this study on observations in academic literature about animal behaviour. Animals, both in captivity and in the wild, have been found to increase their food intake when they are faced with uncertainty about their future food supply.

These latest findings suggest that obesity in affluent societies is a response to the stress of economic insecurity. The researchers found that economic insecurity had a considerably greater effect on obesity than the other factors measured (the existence of a market-liberal regime, inequality, the price of fast food, and the passage of time).

'Obesity under affluence varies by welfare regimes: The effect of fast food, insecurity, and inequality' is by Avner Offer, Rachel Pechey and Stanley Ulijaszek.

Source: ScienceDaily

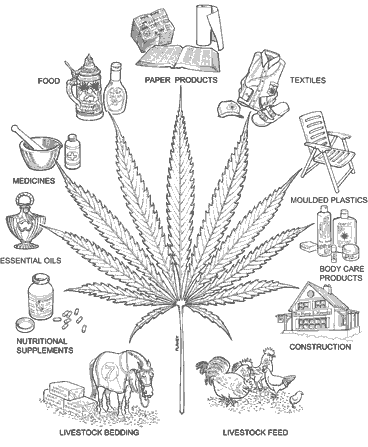

Marijuana plants are either male or female. Male plants produce pollen, which pollinates the flowers of female plants; once pollinated, the females produce seeds. If a female plant isn't pollinated (because no male plants nearby are producing pollen), its flowers/buds continue to develop and produce THC. Unpollinated female plants are referred to as sinsemilla ("without seeds"). Usually 30-50% of Marijuana plants are male.

What's the difference, you ask?

Males are often, but not always, tall with stout stems, sporadic branching and few leaves. Apart from those kept for breeding, males are usually harvested once their sex has been determined but before they shed pollen. When harvesting, especially close to females, cut the plant off at the base, taking care to shake the male as little as possible. This helps prevent accidental pollination by an unnoticed open male flower.

When a male enters the flowering stage, the branch tips where a bud would develop start to grow what looks like a little bud (small balls), but with no white hairs coming out of it. Females have no balls and instead show small white hairs.

Male marijuana plant

In temperate climates, cannabis plants begin to show their sex around the end of July (the end of January in the southern hemisphere), with exact dates varying by variety. Marijuana is the resinous flower of the female cannabis plant, whose natural role is seed production; in the absence of pollen, the buds develop into pure sinsemilla, which is gentle and sweet to smoke.

It is very important to get rid of male plants in time, as they are unwanted pollen carriers. By the early flowering stage, male cannabis has quite a different structure from the female, but the real sex indicators are small growths called primordia, which emerge beside the third or fourth internodes of the main stem.

Female plants are fully revealed when the characteristic V-shaped pistils become visible on close inspection. Outdoors, males reveal themselves approximately three weeks before the females; indoors, both males and females show their sex within a week to ten days, depending on the variety.

Female marijuana plant

We've heard urban legends about environmental conditions, seed age, added chemicals and even lunar phases influencing the sex of cannabis plants. You may note such suggestions as anecdotal advice, but if you lack experience in sexing plants, a good handbook or some online research works best.

What is a Hermaphrodite plant?

A hermaphrodite, or hermie, is a Marijuana plant of one sex that develops the sexual organs of the other sex. Most commonly, a flowering female develops staminate (male) flowers, though the reverse also occurs. Primarily male hermaphrodites are less well recognized only because few growers let their males flower long enough for pistillate (female) flowers to appear.

Hermaphrodites are generally viewed with disfavor. First, they release pollen and ruin a sinsemilla crop, pollinating themselves and all the other females in the room. Second, the resulting seeds are worthless, because hermaphrodite parents tend to pass the trait on to their offspring.

Please note that specious staminate flowers occasionally appear in the last days of flowering of a female plant. These do not drop pollen, and their appearance is not considered evidence of harmful hermaphroditism.

Hermaphrodite marijuana plant

Here's an image of a hermaphrodite, specifically a female Marijuana plant with staminate flowers.

Source: amsterdammarijuanaseedbank.com

Researchers at the National Institute of Standards and Technology (NIST) have for the first time used an apparatus that relies on the "noise" of jiggling electrons to make highly accurate measurements of the Boltzmann constant, an important value for many scientific calculations. The technique is simpler and more compact than other methods for measuring the constant and could advance international efforts to revamp the world's scientific measurement system.

The Boltzmann constant relates energy to temperature for individual particles such as atoms. Its currently accepted value is 1.380 6504 × 10⁻²³ joules per kelvin. This value is based mainly on a 1988 NIST measurement performed using acoustic gas thermometry, with a relative standard uncertainty of less than 2 parts per million (ppm). The technique is highly accurate, but the experiment is complex and difficult to perform. To ensure that the Boltzmann constant can be determined accurately around the world, scientists have been trying to develop different methods that can reproduce this value with comparable uncertainty.

The latest NIST experiment used an electronic technique called Johnson noise thermometry (JNT) to measure the Boltzmann constant with an uncertainty of 12 ppm. The results are consistent with the currently recommended value for this constant. NIST researchers aim to make additional JNT measurements with improved uncertainties of 5 ppm or less, a level of precision that would help update crucial underpinnings of science, including the definition of the kelvin, the international unit of temperature.
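To put the parts-per-million figures in absolute terms, a relative uncertainty is simply multiplied by the value it qualifies. The short sketch below applies this to the accepted value quoted above for the three uncertainty levels mentioned in the article (the 1988 measurement's "less than 2 ppm" is treated as 2 ppm, an upper bound); the helper name `absolute_uncertainty` is hypothetical.

```python
K_B = 1.3806504e-23  # J/K, the accepted value quoted in the article


def absolute_uncertainty(value: float, ppm: float) -> float:
    """Convert a relative standard uncertainty in parts per million into the value's own units."""
    return value * ppm * 1e-6


# "less than 2 ppm" is taken as an upper bound of 2 ppm for the 1988 measurement.
for label, ppm in (("1988 acoustic gas thermometry", 2.0),
                   ("JNT result", 12.0),
                   ("JNT target", 5.0)):
    print(f"{label:30s} {ppm:4.0f} ppm -> ±{absolute_uncertainty(K_B, ppm):.2e} J/K")
```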

The international metrology community is expected soon to fix the value of the Boltzmann constant, which would then redefine the kelvin as part of a larger effort to link all units to fundamental constants. This approach would be the most stable and universal way to define measurement units, in contrast to traditional standards based on physical objects or substances. The kelvin is currently defined in terms of the triple-point temperature of water (273.16 K, or about 0 degrees C and 32 degrees F), the temperature and pressure at which water's solid, liquid and vapor forms coexist in equilibrium. This reference point may vary slightly depending on chemical impurities.

The NIST JNT system measures the very small electrical noise in resistors, a common electronic component, when they are cooled to the triple-point temperature of water. This Johnson noise is created by the random thermal motion of electrons, and the noise power it generates is directly proportional to temperature. The electronic devices measuring the noise power are calibrated with electrical signals synthesized by a superconducting voltage source based on fundamental principles of quantum mechanics. This unique feature enables the JNT system to match electrical power and thermal-noise power at the triple point of water, and ensures that copies of the system will produce identical results. NIST researchers recently improved the apparatus to reduce the statistical uncertainty, systematic errors and electromagnetic interference. Additional improvements in the electronics are expected to further reduce measurement uncertainties.
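The physics being exploited is the Johnson-Nyquist relation: for a resistor R at temperature T, the mean-square noise voltage in a bandwidth Δf is ⟨V²⟩ = 4·k_B·T·R·Δf (the classical, low-frequency form). The sketch below computes that noise for a resistor at the water triple point and then inverts the relation to recover k_B. The resistance and bandwidth are hypothetical illustration values, not NIST's circuit parameters, and the real system does not simply read off a voltage: it compares the noise power against a quantum-synthesized reference signal, as described above.

```python
import math

K_B = 1.3806504e-23  # J/K, value quoted in the article
T_TPW = 273.16       # K, triple point of water


def johnson_noise_vrms(resistance_ohm: float, bandwidth_hz: float,
                       temperature_k: float = T_TPW, k_b: float = K_B) -> float:
    """RMS open-circuit noise voltage from <V^2> = 4 k T R df."""
    return math.sqrt(4.0 * k_b * temperature_k * resistance_ohm * bandwidth_hz)


def boltzmann_from_noise(v_squared: float, resistance_ohm: float,
                         bandwidth_hz: float, temperature_k: float = T_TPW) -> float:
    """Invert the same relation: k = <V^2> / (4 T R df)."""
    return v_squared / (4.0 * temperature_k * resistance_ohm * bandwidth_hz)


# Hypothetical example values, chosen only for illustration.
R = 100.0   # ohms
DF = 1.0e6  # Hz
v = johnson_noise_vrms(R, DF)
print(f"V_rms ≈ {v * 1e6:.2f} µV")
print(f"k recovered: {boltzmann_from_noise(v ** 2, R, DF):.7e} J/K")
```

The signal is on the order of a microvolt, which is why the measurement electronics need the careful calibration and shielding described in the article.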

The new measurements were made in collaboration with guest researchers from the Politecnico di Torino, Italy; the National Institute of Metrology, China; the University of Twente, The Netherlands; the National Metrology Institute of Japan, Tsukuba, Japan; and the Measurement Standards Laboratory, New Zealand.

Source: PhysOrg

More information: S.P. Benz, et al. An electronic measurement of the Boltzmann Constant. Metrologia. Published online March 30, 2011.

Provided by National Institute of Standards and Technology

For some years now, NASA has been using thermoelectric materials to power its space probes. The probes travel so far from the Sun that solar panels are no longer an efficient source of power. So NASA embeds a nuclear material in a radioisotope thermoelectric generator, where it decays and produces heat. Thermoelectric materials then convert that heat into the electricity that powers the probe. The same technology is now being explored for more earthly applications, for example, to capture heat lost in the exhaust of automobiles to produce electricity for the vehicle.

Thermoelectric materials are a hot new technology now being studied intensively by researchers funded through the U.S. Department of Energy's Energy Frontier Research Centers (EFRCs). Olivier Delaire, a Shull fellow at ORNL, is part of one such collaboration, led by an EFRC at the Massachusetts Institute of Technology. Delaire uses neutron scattering and computer simulation to investigate the microscopic structure and dynamics of thermoelectric materials so that researchers can make them more efficient for new, energy-saving applications.

Thermoelectrics can be adapted for both heating and cooling. These materials can convert low-grade heat that is wasted in an industrial process, or in the exhaust system of a vehicle, into electricity. Or, driven by an external power source, they can pump heat away from a surface to cool it.

But there are limitations in the materials themselves. "Right now the materials may be on the order of 10 percent efficiency," Delaire said. "If we can make them two or three times better, if we can get 30% efficiency, that would get people very excited, and it would be much more viable economically."

The researchers want to improve their understanding of phonons, the atomic vibrations that transport heat through thermoelectric materials. "Neutron scattering is unmatched in its ability to probe the atomic vibrations in the crystals," Delaire said. "This is one of the fundamental blocks in the process that we need to understand better."
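Why phonons matter for efficiency can be sketched with the standard thermoelectric figure of merit, ZT = S²σT/κ, where S is the Seebeck coefficient, σ the electrical conductivity and κ the thermal conductivity, which includes a lattice (phonon) part. Lowering the phonon contribution raises ZT, and the usual constant-property formula then turns ZT into a maximum generator efficiency. These textbook relations are not taken from the article, and every numeric value below is hypothetical rather than a measured property of PbTe, La3Te4 or FeSi.

```python
import math


def figure_of_merit(seebeck_v_per_k: float, sigma_s_per_m: float,
                    kappa_electronic: float, kappa_lattice: float,
                    temperature_k: float) -> float:
    """Dimensionless ZT = S^2 * sigma * T / (kappa_e + kappa_lattice), kappa in W/(m K)."""
    kappa_total = kappa_electronic + kappa_lattice
    return seebeck_v_per_k ** 2 * sigma_s_per_m * temperature_k / kappa_total


def max_efficiency(zt: float, t_hot: float, t_cold: float) -> float:
    """Maximum generator efficiency for a material with constant ZT between t_hot and t_cold."""
    carnot = (t_hot - t_cold) / t_hot
    root = math.sqrt(1.0 + zt)
    return carnot * (root - 1.0) / (root + t_cold / t_hot)


# Hypothetical material: the effect of halving the lattice (phonon) thermal conductivity.
zt_before = figure_of_merit(200e-6, 1e5, kappa_electronic=0.5, kappa_lattice=1.0, temperature_k=600.0)
zt_after = figure_of_merit(200e-6, 1e5, kappa_electronic=0.5, kappa_lattice=0.5, temperature_k=600.0)
for zt in (zt_before, zt_after):
    eff = max_efficiency(zt, 800.0, 300.0)
    print(f"ZT = {zt:.2f}, max efficiency (800 K -> 300 K) = {eff:.1%}")
```

The efficiency always stays below the Carnot limit (ΔT/T_hot) and approaches it only as ZT grows without bound, which is why shaving down phonon heat transport is worth the effort.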

At ORNL, Delaire and his collaborators are using the time-of-flight spectrometers ARCS and CNCS at the Spallation Neutron Source (SNS) and the HB-3 triple-axis spectrometer at the High Flux Isotope Reactor (HFIR).

"The SNS instruments offer more power," Delaire said. "They can sample the whole parameter space very efficiently. They offer a unique opportunity in a single experiment to sample all the types of atomic vibrations, all the phonons inside the solid. And once we have this information, we can reconstruct the microscopic thermoconductivity, which is really the property we are trying to understand."

The single crystals are grown at ORNL. They comprise two high-temperature materials for heat recovery, PbTe (lead telluride) and La3Te4 (lanthanum telluride), and FeSi (iron silicide) for refrigeration applications. Single crystals offer advantages in neutron science: they can be measured on the time-of-flight (TOF) instruments at a series of orientations, each giving a comprehensive data set. Combining these sets gives the researchers a complete picture, much like tomography.

"By doing this experiment using the high neutron flux here at SNS we are able to really map out the space, and that is really where the big advance is," Delaire said. "Previously we were only able to look at a few points in the space, and that is like looking with a flashlight. With the flux we have at SNS, we turn on the overhead lights and see the whole room all at once."

Once they have a view of the volume of data, the researchers then use the triple-axis spectrometer at HFIR to zero in and look at pinpoints of phonon vibrations in that region. Next, ab initio computational methods simulate the data that the instruments have generated. These simulations are based on quantum mechanics, which gives the researchers a direct comparison between the neutron science measurements and fundamental theory and theoretical predictions.

In 2010, the researchers uncovered an important coupling between thermal disorder and the electronic structure of FeSi. This type of coupling between thermal disorder and electronic structure at finite temperature could prove to be important in many materials, including thermoelectrics. The research involves a dozen investigators, including Delaire and David Singh, a solid-state theorist in materials science at ORNL. Samples are also contributed by researchers at Boston College. Delaire, Singh and some of the MIT researchers are involved in the computation.

Source: PhysOrg

More information: O. Delaire et al. Phonon softening and metallization of a narrow-gap semiconductor by thermal disorder. Proceedings of the National Academy of Sciences of the United States of America 108 (12), 4725-4730 (March 22, 2011).

Brian C. Sales, et al. "Thermoelectric properties of FeSi and Related Alloys: Evidence for Strong Electron-Phonon Coupling," PACS: 72.20.Pa, 63.20.Kd. Accepted in Phys. Rev. B.

Provided by Oak Ridge National Laboratory

As part of the quest to form perfectly smooth single-molecule layers of materials for advanced energy, electronic, and medical devices, researchers at the U.S. Department of Energy's Brookhaven National Laboratory have discovered that the molecules in thin films remain frozen at a temperature where the bulk material is molten. Thin molecular films have a range of applications extending from organic solar cells to biosensors, and understanding the fundamental aspects of these films could lead to improved devices.

The study, which appears in the April 1, 2011, edition of Physical Review Letters, is the first to directly observe "surface freezing" at the buried interface between bulk liquids and solid surfaces.

"In most materials, you expect that the surface will start to disorder and eventually melt at a temperature where the bulk remains solid," said Brookhaven physicist Ben Ocko, who collaborated on the research with scientists from the European Synchrotron Radiation Facility (ESRF), in France, and Bar-Ilan University, in Israel. "This is because the molecules on the outside are less confined than those packed in the deeper layers and much more able to move around. But surface freezing contradicts this basic idea. In surface freezing, the interfacial layers freeze before the bulk."

In the early 1990s, two independent teams (one at Brookhaven) made the first observation of surface freezing at the vapor interface of bulk alkanes, organic molecules similar to those in candle wax that contain only carbon and hydrogen atoms. Surface freezing has since been observed in a range of simple chain molecules and at various interfaces between them.

"The mechanics of surface freezing are still a mystery," said Bar-Ilan scientist Moshe Deutsch. "It's puzzling why alkanes and their derivatives show this unusual effect, while virtually all other materials exhibit the opposite, surface melting, effect."

In the most recent study, the researchers discovered that surface freezing also occurs at the interface between a liquid and a solid surface. In temperature-controlled environments at Brookhaven's National Synchrotron Light Source and the ESRF, the group brought a piece of highly polished sapphire into contact with a puddle of liquid alkanol, a long-chain alcohol. The researchers shot a beam of high-intensity x-rays through the interface, and by measuring how the x-rays reflected off the sample, they revealed that the alkanol molecules at the sapphire surface behave very differently from those in the bulk liquid.
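X-ray reflectivity is usually analysed as a function of the momentum transfer perpendicular to the interface, q_z = 4π·sin(θ)/λ, where θ is the grazing angle and λ the wavelength. A layer of thickness d at the interface modulates the reflected intensity with a period of roughly 2π/d in q_z, which is how a frozen monolayer, distinct from the liquid behind it, can show up in the data. The sketch below only illustrates these textbook relations; the wavelength and layer thickness are hypothetical, and real analyses typically fit a full density profile rather than reading off a single period.

```python
import math


def momentum_transfer(theta_rad: float, wavelength_nm: float) -> float:
    """Specular momentum transfer q_z = 4*pi*sin(theta)/lambda, in nm^-1."""
    return 4.0 * math.pi * math.sin(theta_rad) / wavelength_nm


def angle_for_q(q_inv_nm: float, wavelength_nm: float) -> float:
    """Grazing angle (radians) at which a given q_z is reached."""
    return math.asin(q_inv_nm * wavelength_nm / (4.0 * math.pi))


def layer_thickness(fringe_period_inv_nm: float) -> float:
    """Thickness implied by a q_z period of interference fringes: d = 2*pi / delta_q."""
    return 2.0 * math.pi / fringe_period_inv_nm


# Hypothetical numbers: a 2.5 nm layer probed with 0.02 nm (high-energy) x-rays.
thickness_nm = 2.5
wavelength_nm = 0.02
period = 2.0 * math.pi / thickness_nm
print(f"fringe period in q_z: {period:.2f} nm^-1")
print(f"q_z reaches one period at {math.degrees(angle_for_q(period, wavelength_nm)):.3f} degrees")
print(f"thickness recovered: {layer_thickness(period):.2f} nm")
```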

According to ESRF scientist Diego Pontoni, "Surprisingly, the alkanol molecules form a perfect frozen monolayer at the sapphire interface at temperatures where the bulk is still liquid." At sufficiently high temperatures, about 30 degrees Celsius above the melting temperature of the bulk alkanol, the monolayer also melts.

The temperature range over which this frozen monolayer exists is about 10 times greater than what's observed at the liquid-vapor interfaces of similar materials. According to Alexei Tkachenko, a theoretical physicist who works at Brookhaven's Center for Functional Nanomaterials, "The temperature range of the surface-frozen layer and its temperature-dependent thickness can be described by a very simple model that we developed. What is remarkable is that the surface layer does not freeze abruptly as in the case of ice, or any other crystal. Rather, a smooth transition occurs over a temperature range of several degrees."

Said Ocko, "These films are better ordered and smoother than all other organic monolayer films created to date."

Moshe Deutsch added, "The results of this study and the theoretical framework which it provides may lead to new ideas on how to make defect-free, single molecule-thick films."

Source: PhysOrg

Provided by Brookhaven National Laboratory

'Cell surgery' using nano-beams.

Fig. 1: RIKEN’s old accelerator, which had been barely holding on to life, was brought back to full operation by the nano-beam project (from right: Walter Meissl, Naoko Imamoto, Yasunori Yamazaki).

From a nucleus to mitochondria, lysosomes and the nuclear pore complex, every animal cell contains a range of organelles within just 1–100 micrometers of space. How might cell functions change if one of these organelles becomes damaged? Despite rapid progress in molecular biology research, such experiments have yet to be fully developed because organelles are too small and fragile to be manipulated individually.

Physicist Walter Meissl has been trying to solve this problem by developing an ultra-narrow ion beam that can pinpoint a single organelle while leaving the surrounding cellular functions intact. Since joining RIKEN in March 2009, the Austrian postdoctoral fellow has succeeded in hitting a nucleus with the ‘nano-beam’, and is now preparing for his ultimate target: a centrosome, one of the smallest organelles. “If a nucleus was like a soccer ball, a centrosome would be only one little point on that ball,” Meissl says.

Creating nano-beams using glass capillaries

Meissl is a central figure of the nano-beam project, which was originally established with a grant from the President’s Fund for fiscal 2007 and 2008. The project was initiated by Yasunori Yamazaki, chief scientist of the Atomic Physics Laboratory at the RIKEN Advanced Science Institute, in collaboration with Naoko Imamoto, chief scientist of the Cellular Dynamics Laboratory at the same institute (Fig. 1). Although the grant ended in March 2009, the researchers still continue to work together in an effort to implement what they call ‘cell surgery’.

Previously at the Vienna University of Technology in Austria, Meissl first heard about the project when Yamazaki gave a talk at his institute in 2008. “I was intrigued with the idea of getting into the interdisciplinary work between physics and biology,” says Meissl, who then decided to join Yamazaki’s laboratory.

Meissl’s work is a small but highly innovative product of Yamazaki’s lab, the main focus of which is the investigation of exotic collision products such as antihydrogen atoms and the development of advanced cooling techniques to capture these particles. The lab has also been developing slow, highly charged ion beams (streams of charged atoms or molecules) with nanometer-scale diameters using glass capillaries. Yamazaki says it is extremely difficult to focus highly charged ions into nano-sized spots, and other groups around the world have been attempting to do so using dedicated lenses that combine the effects of electric and magnetic fields. No one had thought of using glass, because it does not conduct electricity and is susceptible to the build-up of static electricity, which degrades the quality of the ion beam, he says.

Instead, Yamazaki took advantage of glass’s insulation properties. When ions are first injected into the inlet of a glass capillary, they accumulate on the capillary’s inner wall; when the accumulation of ion charge on the inner walls becomes sufficient, subsequently injected ions are naturally guided all the way to the outlet. At a cost of 50 yen (US$0.40) per capillary, “it was so simple, like a joke, but we could confirm the beams were strong enough,” says Yamazaki.

A tweak for biological applications

Nano-beams can be used to manipulate molecules and atoms on surfaces, so demand is growing for their use in the fabrication of semiconductor materials. But Yamazaki wanted to use the technology for unconventional purposes, and sought ideas at one of RIKEN’s informal chief scientist meetings, at which chief scientists with various backgrounds come together to learn about each other’s activities. Yamazaki became intrigued with the potential biological applications of his nano-beams, and suggested a collaboration with Imamoto, whose primary area of study is in the regulation and maintenance of nuclear function. “But at first, my idea was rebuffed,” Yamazaki says, “because the beams can only be produced in a vacuum chamber, and cells die without air.”

Fig. 2: A thin glass cap at the tip of the glass capillary makes it possible to apply the nano-beam in biological experiments.

Yet Yamazaki was undaunted and hit upon the idea of adding a thin glass cap at the capillary outlet so it can be immersed in a liquid while maintaining the capillary vacuum (Fig. 2). The beam can be controlled so that it travels only 100 nanometers to several micrometers and the ions have enough energy to penetrate the window, allowing it to be used to irradiate a single organelle, or even a part of one, Yamazaki says. Another advantage of glass is that it is transparent and thus enables researchers to observe the irradiation point directly using an optical microscope. Compared to conventional ionizing radiation, which does not have the precision or selectivity of the nano-beam, “the new beam could lead us to observe more precisely how the damage to an organelle affects overall cell dynamics,” Imamoto says.

A physicist learns how to culture cells

Yoshio Iwai, a postdoctoral researcher who recently left the laboratory, spent the first 18 months of the project constructing a dedicated beam line for a small tandem accelerator at RIKEN. Now, much of the work has been handed over to Meissl. Unusually for a physicist, he began by learning from Imamoto how to culture cells, multiply them, and prepare solutions for experiments. “It is very exciting and totally different from my previous work because a living cell shows unpredictable results, a drastic change from surface physics,” Meissl says.

Meissl also made some additional changes to the beamlines. “Usually in physics, you fight for more ions, more intensity. But for biological experiments, we need as little radiation as possible because even a single particle can harm a cell.” He installed a fast beam switch that allows attenuation of the ion beam down to short packets containing as little as a single ion.
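Why a fast beam switch can deliver packets of a single ion becomes clearer with a little arithmetic. The mean number of ions in a packet is the beam current times the packet duration divided by the charge carried per ion; if ions arrive independently, the count per packet follows a Poisson distribution. The current, charge state and packet length below are hypothetical, and Poisson arrivals are our assumption rather than a property reported for the RIKEN beamline.

```python
import math

E_CHARGE = 1.602176634e-19  # C, elementary charge


def mean_ions_per_packet(beam_current_a: float, packet_duration_s: float,
                         charge_state: int) -> float:
    """Average number of ions in one chopped packet: N = I * t / (q * e)."""
    return beam_current_a * packet_duration_s / (charge_state * E_CHARGE)


def poisson_pmf(k: int, mean: float) -> float:
    """Probability that a packet holds exactly k ions, ASSUMING independent (Poisson) arrivals."""
    return math.exp(-mean) * mean ** k / math.factorial(k)


# Hypothetical beam: 1 pA of 5+ ions chopped into 1-microsecond packets.
mean_count = mean_ions_per_packet(1e-12, 1e-6, 5)
print(f"mean ions per packet: {mean_count:.2f}")
for k in range(4):
    print(f"P({k} ions) = {poisson_pmf(k, mean_count):.3f}")
```

Shortening the packet or lowering the current pushes the mean below one, trading more empty packets for a smaller chance of delivering two or more ions to the cell.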

From nucleus to centrosome

The most difficult part of the experiments is manually setting up the cell in the best position for nano-beam irradiation, Meissl says (Fig. 3). An equally important step is to optimize the strength of the ion beam and calculate the right irradiation time. By switching the beam on and off in less than one microsecond, Meissl takes multiple shots at the cell surface, but each time he obtains different results. “I need much more target practice,” he says. “It’s not as easy an experiment as it looks. A lot of patience is required,” Imamoto adds.

Fig. 3: Meissl manually prepares the cell and sets up the incident direction of the ion beam.

In the summer of 2009, Meissl succeeded in hitting a nucleus, and the cell died immediately. After a number of attempts, he has fine-tuned the strength of the beam and is now able to hit the nucleus while keeping the cell alive. He is now preparing to target a centrosome. At less than one micrometer in diameter, the centrosome exists as a pair of organelles floating near the nucleus, and organizes microtubules to divide chromosomes into daughter cells during cell division. Yamazaki and Imamoto are curious to see what will happen if one of the pair is damaged, but Meissl says it is incomparably more difficult than targeting a nucleus.

Fig. 4: The nano-beam has been successfully used to selectively damage a nucleus (fluorescent green) in a cancer cell.

“The project is just becoming science,” Yamazaki says. “We have just begun to explore the potential of this new technique that can lead to unprecedented applications bridging biology and physics.”

Source: PhysOrg

References

1. T. Ikeda, Y. Kanai, T. M. Kojima, Y. Iwai, T. Kambara, M. Hoshino, T. Nebiki, T. Narusawa & Y. Yamazaki. Production of a microbeam of slow highly charged ions with a tapered glass capillary. Applied Physics Letters 89, 163502 (2006).

2. Y. Iwai, T. Ikeda, T. M. Kojima, Y. Yamazaki, K. Maeshima, N. Imamoto, T. Kobayashi, T. Nebiki, T. Narusawa & G. P. Pokhil. Ion irradiation in liquid of μm³ region for cell surgery. Applied Physics Letters 92, 023509 (2008).

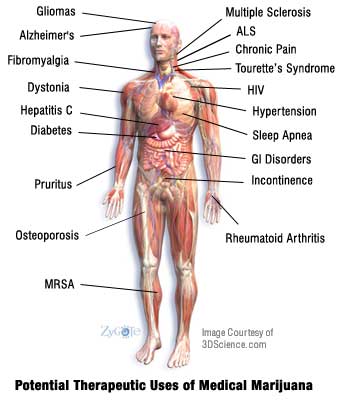

The active ingredient in marijuana cuts tumor growth in common lung cancer in half and significantly reduces the ability of the cancer to spread, say researchers at Harvard University who tested the chemical in both lab and mouse studies.

They say this is the first set of experiments to show that the compound, delta-9-tetrahydrocannabinol (THC), inhibits epidermal growth factor (EGF)-induced growth and migration in non-small cell lung cancer cell lines that express the EGF receptor (EGFR). Lung cancers that overexpress EGFR are usually highly aggressive and resistant to chemotherapy.

THC, which targets the cannabinoid receptors CB1 and CB2, is similar in function to endocannabinoids, cannabinoids that are produced naturally in the body and activate these same receptors. The researchers suggest that THC or other designer agents that activate these receptors might be used in a targeted fashion to treat lung cancer.

"The beauty of this study is that we are showing that a substance of abuse, if used prudently, may offer a new road to therapy against lung cancer," said Anju Preet, Ph.D., a researcher in the Division of Experimental Medicine.

Acting through cannabinoid receptors CB1 and CB2, endocannabinoids (as well as THC) are thought to play a role in a variety of biological functions, including pain and anxiety control, and inflammation. Although a medical derivative of THC, known as Marinol, has been approved for use as an appetite stimulant for cancer patients, and a small number of U.S. states allow use of medical marijuana to treat the same side effect, few studies have shown that THC might have anti-tumor activity, Preet says. The only clinical trial testing THC as a treatment against cancer growth was a recently completed British pilot study in human glioblastoma.

In the present study, the researchers first demonstrated that two different lung cancer cell lines, as well as patient lung tumor samples, express CB1 and CB2, and that non-toxic doses of THC inhibited growth and spread in the cell lines. "When the cells are pretreated with THC, they have less EGFR-stimulated invasion as measured by various in vitro assays," Preet said.

Then, for three weeks, researchers injected standard doses of THC into mice that had been implanted with human lung cancer cells, and found that tumors were reduced in size and weight by about 50 percent in treated animals compared to a control group. There was also about a 60 percent reduction in cancer lesions on the lungs in these mice as well as a significant reduction in protein markers associated with cancer progression, Preet says.

Although the researchers do not know why THC inhibits tumor growth, they say the substance could be activating molecules that arrest the cell cycle. They speculate that THC may also interfere with angiogenesis and vascularization, which promote cancer growth.

Preet says much work is needed to clarify the pathway by which THC functions, and cautions that some animal studies have shown that THC can stimulate some cancers. "THC offers some promise, but we have a long way to go before we know what its potential is," she said.

Source: The above story is reprinted (with editorial adaptations by ScienceDaily staff) from materials provided by American Association for Cancer Research

Scientists from the Morgridge Institute for Research, the University of Wisconsin-Madison, the University of California and the WiCell Research Institute moved gene therapy one step closer to clinical reality by determining that the process of correcting a genetic defect does not substantially increase the number of potentially cancer-causing mutations in induced pluripotent stem cells.

Their work, scheduled for publication the week of April 4 in the online edition of the journal Proceedings of the National Academy of Sciences and funded by a Wynn-Gund Translational Award from the Foundation Fighting Blindness, suggests that human induced pluripotent stem cells altered to correct a genetic defect may be cultured into subsequent generations of cells that remain free of the initial disease. However, although the gene correction itself does not increase the instability or the number of observed mutations in the cells, the study reinforced other recent findings that induced pluripotent stem cells themselves carry a significant number of genetic mutations.

"This study showed that the process of gene correction is compatible with therapeutic use," says Sara Howden, primary author of the study, who serves as a postdoctoral research associate in James Thomson's lab at the Morgridge Institute for Research. "It also was the first to demonstrate that correction of a defective gene in patient-derived cells via homologous recombination is possible."

Like human embryonic stem cells, induced pluripotent stem cells can become any of the 220 mature cell types in the human body. Induced pluripotent stem cells are created when skin or other mature cells are reprogrammed to a pluripotent state through exposure to select combinations of genes or proteins.

Since they can be derived from a patient's own cells, induced pluripotent stem cells may offer some clinical advantages over human embryonic stem cells by avoiding problems with rejection. However, scientists are still working to understand subtle differences between human embryonic and induced pluripotent stem cells, including a higher rate of genetic mutations among the induced pluripotent cells and evidence that the cells may retain some "memory" of their previous lineage.

Gene therapy using induced pluripotent stem cells holds promise for treating many inherited and acquired diseases such as Huntington's disease, degenerative retinal disease or diabetes. The patient in this study suffers from a degenerative eye disease known as gyrate atrophy, which is characterized by progressive loss of visual acuity and night vision leading to eventual blindness.

While diseases such as genetic retinal disorders and diabetes offer attractive targets for induced pluripotent stem cell-based transplant therapies, concerns have been raised over the commonly occurring mutations in the cells and their potential to become cancerous.

Howden says that because gene targeting to correct specific genetic defects typically requires an extended culture period beyond initial induced pluripotent stem cell generation, researchers have been interested to learn whether the process would increase the number of mutations in the cells. The team set out to determine if it was possible to correct defects without introducing a level of mutations that would be incompatible with clinical applications.

In the study, the researchers used a technique called episomal reprogramming to generate the induced pluripotent stem cells. In contrast to techniques that use retroviruses, episomal reprogramming doesn't involve inserting DNA into the genome. This technique allowed them to produce cells that were free of potentially harmful transgene sequences.

The scientists then corrected the actual retinal disease-causing gene defect using a technique called homologous recombination. The stem cells were extensively "characterized" or studied before and after the process to assess whether they developed significant additional mutations or variations. The results showed that the culture conditions required to correct a genetic defect did not substantially increase the number of mutations.

"By showing that the process of correcting a genetic defect in patient-derived induced pluripotent cells is compatible with therapeutic use, we eliminated one barrier to gene therapy based on these cells," Howden says. "There is still much work to be done."

David Gamm, an author of the study and an assistant professor with the Department of Ophthalmology and the Waisman Center Stem Cell Research Program, says the ability to correct gene defects in a patient's own induced pluripotent stem cells should increase the appeal of stem cell technology to researchers striving to improve vision in patients with inherited blinding disorders.

"Although further development certainly is needed before such techniques may reach the clinical trial stage, our findings offer reason for continued hope," Gamm says. "Dr. Howden and our collaborative group have overcome an important hurdle which, when considered in the context of other recent developments, may lead to personalized stem cell therapies that benefit people with genetic visual disorders."

In addition to primary author Howden, who holds joint appointments with the Morgridge Institute for Research, the Department of Cell and Regenerative Biology and the Genome Center of Wisconsin, co-authors of the study included: Thomson, who in addition holds an appointment with the Department of Molecular, Cellular & Developmental Biology, University of California-Santa Barbara; Gamm, who holds joint appointments with the UW-Madison School of Medicine and Public Health's Department of Ophthalmology and Visual Sciences and the Waisman Center Stem Cell Research Program; Jeff Nie, Guokai Chen, Brian McIntosh, Daniel Gulbranson, Nicole Diol and David Vereide with the Morgridge Institute for Research; Athurva Gore, Zhe Li, Ho-Lim Fung and Kun Zhang, of the Department of Bioengineering at the University of California-San Diego; and Benjamin Nisler, Seth Taapken and Karen Dyer Montgomery of WiCell Research Institute.

Source: ScienceDaily
